PortableGL

Crowdfunding Announcement

I'm launching a crowdfunding campaign soon for a PortableGL-based shadertoy application. If you'd like to support PortableGL development or are interested in shader art (or ideally both) check it out here.

"Because of the nature of Moore's law, anything that an extremely clever graphics programmer can do at one point can be replicated by a merely competent programmer some number of years later." -John Carmack

In a nutshell, PortableGL is an implementation of OpenGL 3.x core (mostly; see GL Version) in clean C99 as a single header library (in the style of the stb libraries). This means it compiles cleanly as C++ and can be easily added to almost any codebase.

It can theoretically be used with anything that takes a 32- or 16-bit framebuffer/texture as input in any format (including just writing images to disk manually or using something like stb_image_write). That should mean it supports almost everything, barring performance issues.
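As a rough illustration of the "write images to disk manually" route, here is a minimal sketch that dumps a 32-bit framebuffer to a binary PPM file. The helper name `write_ppm_rgba` is hypothetical and not part of PortableGL, and the sketch assumes the bytes of each pixel are stored in R, G, B, A order with rows running top to bottom; other color buffer formats would need their channels reordered first.

```c
#include <stdint.h>
#include <stdio.h>

/* Hypothetical helper (not part of PortableGL): write a 32-bit framebuffer
 * to a binary PPM (P6) file. Assumes each pixel is 4 bytes in R, G, B, A
 * order and that rows are stored top to bottom.
 * Returns 0 on success, -1 on failure. */
int write_ppm_rgba(const char* path, const uint8_t* pixels, int width, int height)
{
	FILE* f = fopen(path, "wb");
	if (!f) return -1;

	/* PPM header: magic number, dimensions, maximum channel value */
	fprintf(f, "P6\n%d %d\n255\n", width, height);

	for (int i = 0; i < width * height; ++i) {
		/* PPM has no alpha channel, so only R, G, B are written */
		if (fwrite(&pixels[i * 4], 1, 3, f) != 3) {
			fclose(f);
			return -1;
		}
	}
	return fclose(f) == 0 ? 0 : -1;
}
```

Calling it once per frame with the pointer to your color buffer gives a sequence of images that any viewer supporting PPM can open.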

Almost all the demos use SDL2 except the programs in the backends directory which show how to use it with other backends (currently x11/xlib and win32).

It supports arbitrary 32- and 16-bit color buffer formats (selected at compile time) with several common ones ready to use out of the box. See the documentation for more details.
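To give a feel for what a compile-time color format selection can look like, here is a minimal sketch. The macro and type names (`SKETCH_RGB565`, `pixel_t`, `PACK_PIXEL`, `fill_pixels`) are made up for this illustration and are not PortableGL's actual configuration macros; check the documentation for the real ones.

```c
#include <stdint.h>

/* Hypothetical names for illustration only. Defining SKETCH_RGB565 before
 * this point selects a 16-bit format, otherwise a 32-bit RGBA format is used. */
#ifdef SKETCH_RGB565
typedef uint16_t pixel_t;
/* 5 bits red, 6 bits green, 5 bits blue; alpha is dropped */
#define PACK_PIXEL(r, g, b, a) \
	((pixel_t)((((r) >> 3) << 11) | (((g) >> 2) << 5) | ((b) >> 3)))
#else
typedef uint32_t pixel_t;
/* 8 bits per channel, red in the most significant byte */
#define PACK_PIXEL(r, g, b, a) \
	((pixel_t)(((uint32_t)(r) << 24) | ((uint32_t)(g) << 16) | \
	           ((uint32_t)(b) << 8) | (uint32_t)(a)))
#endif

/* The rest of the code only deals in pixel_t and PACK_PIXEL, so it does
 * not need to know which format was chosen. */
void fill_pixels(pixel_t* buf, int count, uint8_t r, uint8_t g, uint8_t b, uint8_t a)
{
	pixel_t p = PACK_PIXEL(r, g, b, a);
	for (int i = 0; i < count; ++i) {
		buf[i] = p;
	}
}
```

The point of the design is that the format decision costs nothing at runtime: the unused branch is never compiled.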

Its goals are, roughly in order of priority,

1. Portability

2. Matching the API within reason, at the least matching features/abilities

3. Ease of Use

4. Straightforward code

5. Speed

Obviously there are trade-offs between several of those. An example where 4 trumps 2 (and arguably 3) is shaders. Rather than write or include a GLSL parser and have a built-in compiler or interpreter, shaders are special C/C++ functions that match a specific prototype. Uniforms are another example where 3 and 4 beat 2: it made no sense to match the API when we can do things so much more simply by passing a pointer to a user-defined struct (see the examples).
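The idea of "shaders are plain functions, uniforms are a pointer to your own struct" can be sketched in a few lines. Everything below (the `vec4f` type, the two function pointer typedefs, `sketch_program`, `run_once`) is hypothetical and simplified for illustration; PortableGL's real shader prototypes take different parameters, so see the examples for those.

```c
typedef struct { float x, y, z, w; } vec4f; /* hypothetical vector type */

/* Hypothetical shader prototypes, for illustration only. */
typedef void (*vertex_shader_fn)(const vec4f* attribs, vec4f* out_position, void* uniforms);
typedef void (*fragment_shader_fn)(vec4f* out_color, void* uniforms);

/* User defined uniform block. The renderer never inspects it,
 * it only hands the pointer back to the shaders. */
typedef struct {
	float scale;
	vec4f color;
} my_uniforms;

/* Vertex shader: scales attribute 0 by a uniform factor */
void scale_vs(const vec4f* attribs, vec4f* out_position, void* uniforms)
{
	my_uniforms* u = (my_uniforms*)uniforms;
	out_position->x = attribs[0].x * u->scale;
	out_position->y = attribs[0].y * u->scale;
	out_position->z = attribs[0].z * u->scale;
	out_position->w = attribs[0].w;
}

/* Fragment shader: outputs a single uniform color */
void solid_color_fs(vec4f* out_color, void* uniforms)
{
	*out_color = ((my_uniforms*)uniforms)->color;
}

/* A "program" is then just two function pointers plus the uniform pointer. */
typedef struct {
	vertex_shader_fn vs;
	fragment_shader_fn fs;
	void* uniforms;
} sketch_program;

/* Run both stages once, the way a pipeline would call them. */
void run_once(const sketch_program* p, const vec4f* attribs, vec4f* pos, vec4f* color)
{
	p->vs(attribs, pos, p->uniforms);
	p->fs(color, p->uniforms);
}
```

Compared with querying uniform locations by name and setting each value through its own call, updating a uniform here is just an assignment to a struct member.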

Download

Get the source from Github.

You can also install it via Homebrew.

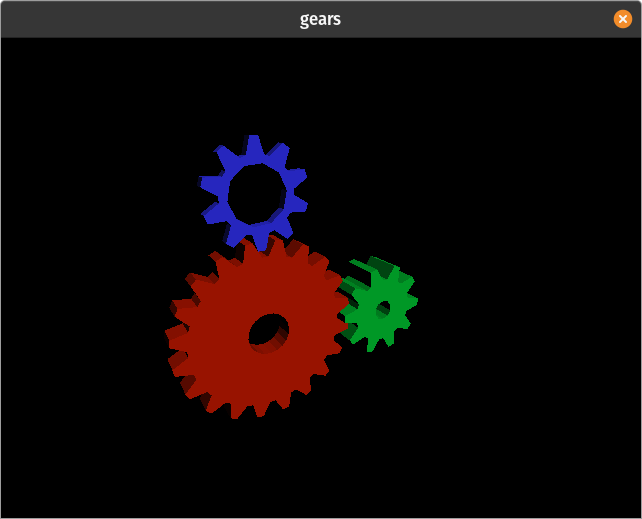

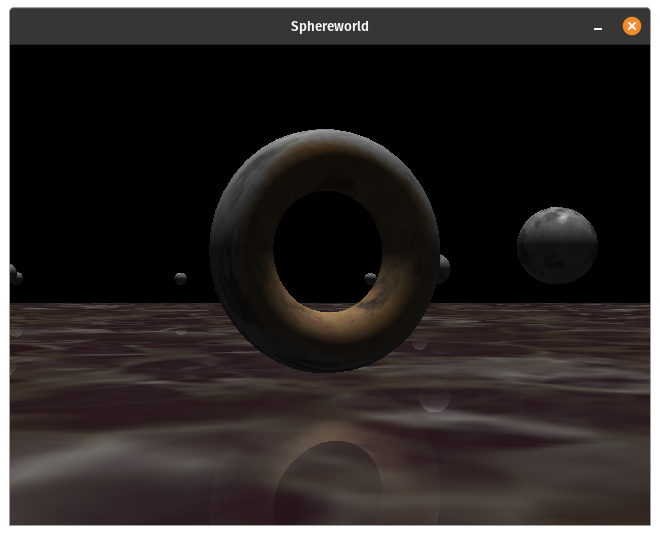

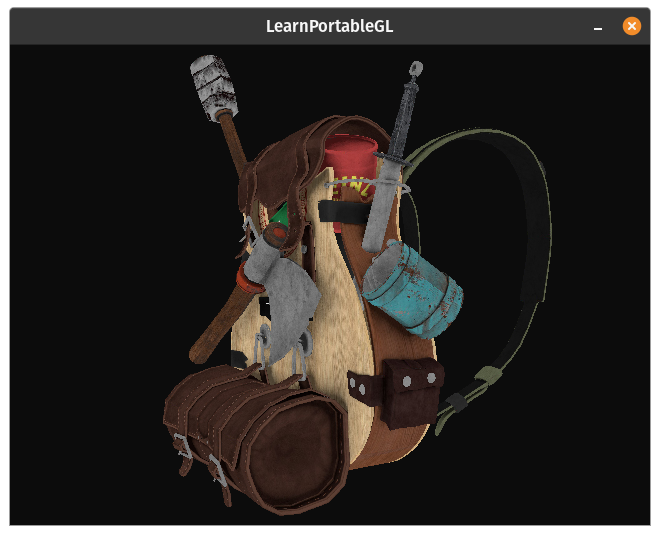

Gallery

The last is a PortableGL port of Michael Fogleman's Craft.

Directory Structure

- demos: Unpolished open ended programs demonstrating a wide variety of features
- examples: More polished examples in C and C++, some graduating from demos
  - original: Original custom examples, C++ programs use rsw_math rather than glm
  - classic: Ports of classic OpenGL programs/demos, currently just gears
  - webgl_lessons: Ports of lessons from learningwebgl.com based off my ports to OpenGL 3.3 here
- backends: "hello triangle" using backends other than SDL2 (win32 and xlib currently)
- glcommon: Collection of helper libraries I use for graphics programming
- media: Parent directory for external resources
  - models: Models in my own simplified text format (created with demos/assimp_convert)
  - screenshots: Screenshots of demos and external programs
  - textures: All textures used in any program in the repo
- src: Contains the actual source files of portablegl.h which are amalgamated with generate_gl_h.py
- testing: Contains a more formal regression and performance test suite
  - expected_output: The expected output frames for the regression tests (run_tests)
  - test_output: The output of the regression tests (see Building section)
- portablegl.h: Current dev version of PortableGL

While I try not to introduce bugs, they do occasionally slip in, as well as (rarely) breaking changes. At some point I'll move to more frequent point releases for fixes and non-breaking changes and be more consistent with semantic versioning.

Documentation

There is documentation, including a minimal program, in the comments at the top of portablegl.h, but there is currently no formal documentation.

The best way to learn is to look at the examples (and demos) and compare them to equivalent OpenGL 3.3+ programs.

My ports of the learnopengl.com tutorial code here are the best resource, combining the original tutorials, which explain the OpenGL aspects, with my comments in the ported code explaining PortableGL's differences and limitations (at least the first time they appear).

For the original examples and demos you can compare with my equivalent programs in opengl_reference.

Honestly, the official OpenGL docs and reference pages are good for 90-95% of it as far as basic usage:

- 4.6 Core reference

- 4.5 comprehensive reference

- tutorials and guides

Building

There are no dependencies for PortableGL itself, other than a compliant C99/C++ compiler.

If you just want to do a quick test that it compiles and runs:

    cd testing
    make run_tests
    ...
    ./run_tests
    All tests passed

See the testing README for more on the formal testing.

See the examples README which describes how to get SDL2 if you don't already have it and how to use make to build them.

To sum up, the only thing that is guaranteed to build and run anywhere out of the box with no extra effort on your part is the regression test suite, since it doesn't depend on anything except a compliant C++ compiler.

Modifying

portablegl.h is generated in the src subdirectory with the python script generate_gl_h.py. You can see how it's put together and either modify the script to leave out or add files, or actually edit any of the code. Make sure if you add any actual gl functions that you add them to gl_function_list.c as it's used in the script for optionally wrapping all of them in a macro to allow user defined prefix/namespacing.
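For readers unfamiliar with the technique, wrapping function names in a macro to get a user defined prefix generally looks something like the sketch below. The macro names (`SKETCH_PREFIX`, `SKETCH_FN`) and the stand-in function are hypothetical; the macros that generate_gl_h.py actually emits may be named and structured differently.

```c
/* Hypothetical macro names, for illustration only.
 * The user can define SKETCH_PREFIX before this point to pick a prefix. */
#ifndef SKETCH_PREFIX
#define SKETCH_PREFIX my_
#endif

/* Two levels of macros so SKETCH_PREFIX is expanded before token pasting */
#define SKETCH_CAT_(a, b) a##b
#define SKETCH_CAT(a, b) SKETCH_CAT_(a, b)
#define SKETCH_FN(name) SKETCH_CAT(SKETCH_PREFIX, name)

static int clear_calls = 0;

/* With the default prefix this defines my_glClear(), so it cannot clash
 * with a glClear() provided by a real OpenGL library in the same program. */
void SKETCH_FN(glClear)(unsigned int mask)
{
	(void)mask; /* stand-in body: just count the calls */
	++clear_calls;
}
```

This is why every new gl function needs to be on the function list: a name the script does not know about would be left unwrapped.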

Additionally, there is a growing set of more formal tests in testing, one set of regression/feature tests, and one for performance. If you make any changes to core algorithms or data structures, you should definitely run those and make sure nothing broke or got drastically slower. The examples and demos can also function as performance tests, so if one of those would be especially affected by a change, it might be worth comparing its before/after performance too.

On the other hand, if you're adding a function or feature that doesn't really affect anything else, it might be worth adding your own test if applicable. You can see how they work from looking at the code, but I'll add more details and documentation about the testing system later when it's more mature.

Bindings/Ports

portablegl-rs is a Rust port created with the assistance of AI (Anthropic's Claude Opus 4.6).

pgl is a Go port using CXGO, and hand translating the individual examples/demos.

Sponsors

You can help support PortableGL development by becoming a Github Sponsor or via one of the other methods shown/linked to in the Sponsor popup.

Past: Aeronix (Sep-Oct 2023)

LICENSE

PortableGL is licensed under the MIT License (MIT)

The code used for clipping is copyright (c) Fabrice Bellard from TinyGL also under the MIT License, see LICENSE.

History

PortableGL started as a very simple wireframe software renderer based on a tutorial in summer 2011. I kept playing with it, adding minor features over the next year, until in early 2013 I decided I should turn it into a software implementation of OpenGL. This would save me a huge amount of time and energy on API design since I'd just be implementing an existing good API (though some disagree) and also make the project more useful both to me and potentially others. Also, at the time Mesa3D was still years away from full 3.x support, not that I'm really competing, and the fact that there was no finished implementation was a little motivating. I made a lot of progress that year and had a few bursts here and there since, but once I got it mostly working, I was less motivated and when I did work on it I spent my time on creating new demos/examples and tweaking or fixing minor things. I could have released an MVP back in 2014 at the earliest but late 2016 would have been the best compromise. Anyway, after several thousand hours spread out over more than 10 years, it is as you see it today. Software is never finished, and I'll be the first to admit PortableGL could use more polish.

Why

Aside from the fact that I just wrote it for fun and because I thought it was cool (maybe others will too), I can think of a few semi-practical purposes.

Educational

I took a 400 level 3D Graphics course in college in fall 2010 months after OpenGL 3.3/4.0 was released. It was taught based on the original Red Book using OpenGL 1.1. Fortunately, the professor let me use OpenGL 3.3 as long as I met the assignment requirements. Sadly, college graphics professors still teach 1.x and 2.x OpenGL today in 2022 more commonly than 3.x/4.x (or Vulkan). A few are using WebGL 2.0 which I kind of consider 1 step forward, 2 steps back.

While Vulkan is the newest thing (already 5 years old, time flies), it really is overkill for learning 3D graphics. There is rarely anything that students make in your standard intro to 3D graphics that remotely stresses the performance of any laptop built in the last decade plus. Using modern OpenGL (i.e. 3.3+ core profile) to introduce all the standard concepts (vertices, triangles, textures, shaders, fragments/pixels, the transformation pipeline etc.) first is much better than trying to teach them Vulkan and graphics at the same time, and obviously better than teaching OpenGL APIs that are decades old.

PortableGL could be a very convenient base for such a class. It's easy to walk through the code and see the pipeline and how all the steps flow together. For more advanced classes or graduate students in a shared class, modifying PortableGL in some way would be a good project. It could be some optimization or algorithm, maybe a new feature. Theoretically it could be used as starter code for actual research into new graphics algorithms or techniques because it's such a convenient small foundation to change and share, vs trying to modify a project the size and complexity of Mesa3D or create a software renderer from scratch.

Special Cases

It's hard to imagine any hardware today that has a CPU capable of running software rendered 3D graphics at any respectable speed (especially with full IEEE floating point) that doesn't also have some kind of dedicated GPU. The GPU might only support OpenGL 2.0 give or take but for performance it'd be better to stick to whatever the hardware supported than use PortableGL. However, theoretically, there could be some platform somewhere where the CPU is relatively powerful that doesn't have a GPU. Maybe some of the current and future RISC SoC's for example? In such a case PortableGL might be a useful alternative to Mesa3D or similar.

Another special case is hobby OS's. The hardware they run on might have a GPU but it might be impossible or more trouble than it's worth to get Mesa3D to run on some systems. If they have a C99 compliant compiler and standard library, they could use PortableGL to get at least some OpenGL-ish 3D support.

Why C

I recently came across a comment regarding PortableGL that essentially asked, "why implement a dead technology in a dying language?"

While I would argue that OpenGL is far from dead and C isn't even close to dying, there are many good reasons to write a library in C.

Here are a few libraries written in C, along with some links to their reasoning:

- SQLite: The most deployed database in the world.

- raylib: One of the most starred and active repos, and among the most popular OSS game engines.

- Lua: The very popular language used in tools like premake, game engines like Love, and best selling games like Balatro.

- Chipmunk Physics

- SDL: The cross platform library used in 10000's of games and apps, including most of my own demos.

- GLFW: A popular OpenGL/Vulkan specific cross-platform library.

- stb: The OG single header libraries like stb_image. His own answers to why C and why single-headers.

- Sokol: He has a whole series of blog posts.

- miniaudio

- many many more...

If you read through any of those you may have noticed a pattern. Choosing to write a library in the C++-clean subset of C gives you automatic C/C++ support, the most portability across platforms, the easiest integration into other projects, and the easiest bindings to other languages. Keeping the library small with few or no dependencies only enhances all those benefits and makes it even easier to use.

All that said, if I were ever going to actually write a real/large 3D application or game I would probably use C++ for the benefits like operator overloading, just like I do with the majority of the demos. Choosing a language for a large user level application is an entirely different animal from choosing one for a library. On the other hand, there are still reasons to use C, including objective reasons like compilation time and binary size, but most are more subjective/personal. On the other other hand, I don't think the "It Runs Doom" meme would exist if it were written in C++ and who doesn't want their application to run on toasters and oscilloscopes 3 decades after it was released?

Lastly, I just like C. It was my first language and is still my favorite for a host of reasons. Hey, if it's good enough for Bellard, it's certainly good enough for me.

What PortableGL Is Not

It is not a drop in replacement for libGL the way Mesa and some other software rendering libraries are. Porting a real OpenGL program to PGL will require some code changes, though depending on the program that could be as little as a couple dozen lines or so. In many cases the biggest changes required have nothing to do with PGL vs OpenGL directly, but with having to change the windowing system. If you want to use PGL for full window software rendering you need a system that supports blitting raw pixels to the screen. Libraries like GLFW which are designed to be used with real OpenGL do not have that capability because everything is done through the OpenGL context. There is some talk of adding some kind of support for real software rendering but I don't see it going anywhere because it just doesn't make sense for GLFW's goals. SDL is great but it is a rather large dependency that links dozens of external libraries. So what else is out there?

I've seen many lighter windowing/input libraries out there that wrap platform specific toolkits that would work, most recently RGFW which recently added a PGL example.

There are also, of course, lower level/platform specific backends like win32 and X11's xlib which I now have examples for in the backends directory. See the backends README for more details.

GL Version

You may have noticed that I link to OpenGL 4 references above, even though I describe PortableGL as "3.x-ish". There is a good reason for that.

When I first started PortableGL I originally wanted to target OpenGL 3.3 Core profile since that's what I knew, and for the history of this project I've described it as 3.x-ish core, but that's not entirely accurate. While I don't include any of the old fixed function stuff (no glBegin/glEnd or anything that goes with them), right away I found that I supported some things from the compatibility profile (like a default VAO) for free. Later I realized there was no reason not to add the 4.x DSA functions which are also simple to implement as everything is in RAM anyway. Mapping buffers is free for the same reason, and textures too (see pgl_ext.c).

In late 2023 I was working with OpenGL ES 2. I'd worked with it before but in the past it seemed so similar to what I already knew, I mostly skimmed the book, assuming most differences were just fewer formats and smaller limits. Obviously that's not quite true. In digging deeper, I learned about "client arrays" and they explain why the last parameter to VertexAttribPointer is const GLvoid* pointer and not GLsizei offset. Of course the name should have given it away too. It turns out even OpenGL 3.3 (compatibility) and ES 3.0 still support client arrays, as long as the current VAO is 0. So now I technically match their spec but as a software renderer, there's really no downside to using client arrays if you prefer that. You can easily change the if statement.
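The dual meaning of that last parameter can be sketched as follows. The struct and function names are hypothetical, and the sketch keys the decision on whether a buffer object is bound, which is a simplification assumed here for illustration rather than PortableGL's actual check.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical state for one vertex attribute, for illustration only. */
typedef struct {
	const uint8_t* buffer_data; /* NULL means no buffer object is bound */
} attrib_binding;

/* Resolve the "pointer" argument of a VertexAttribPointer-style call
 * to the address the vertex data will actually be read from. */
const void* resolve_attrib_pointer(const attrib_binding* b, const void* pointer)
{
	if (b->buffer_data) {
		/* Buffer bound: "pointer" really carries a byte offset into it */
		return b->buffer_data + (uintptr_t)pointer;
	}
	/* Client array: "pointer" is a real address in the caller's memory */
	return pointer;
}
```

In a software renderer both cases end up as an ordinary address in RAM, which is why supporting client arrays costs essentially nothing.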

And as of mid-2024 I just added support for the very useful GL debug output from OpenGL 4.3. It doesn't support everything because most is overkill/unnecessary for PGL so far, but by default PGL will print all errors to stdout and you can set your own message handler just like normal.

So what version of OpenGL is PortableGL? Shrug, it's still mostly 3.x but I will add things outside of 3.x as long as it makes sense to me and is in line with the goals and priorities of the project.

References

While I often used the official OpenGL documentation to make sure I was matching the spec as closely as realistically possible, what I used most, especially early on were a few textbooks.

The first was Fundamentals of Computer Graphics 3rd Edition which I used extensively early on to understand all the math involved, including the matrix transformation pipeline, barycentric coordinates and interpolation, texture mapping and more. There is now a 4th Edition and a soon to be released 5th Edition.

The second was the 5th edition of the OpenGL Superbible. I got this in 2010, right after OpenGL 3.3/4.0 was released, and used it for my college graphics course mentioned above. A lot of people didn't like this book because they thought it relied too much on the author's helper libraries but I had no problems. It was my first exposure to any kind of OpenGL so I didn't have to unlearn the old stuff and all his code was free and available online so it was easy to look inside and not only see what actual OpenGL calls are used, but to then develop your own classes to your own preferences. I still use a class based on his GLFrame class for example.

In any case, that's the book I actually learned OpenGL from, and still use as a reference sometimes. I have a fork of the book repo too that I occasionally look at/update. Of course they've come out with a 6th and a 7th edition in the last decade.

Lastly, while I haven't used it as much since I got it years later, the OpenGL 4.0 Shading Language Cookbook has been useful in specific OpenGL topics occasionally. Once again, you can now get the expanded 3rd edition.

Similar/Related Projects

I'll probably add others to this list as I find them.

TinyGL is Fabrice Bellard's implementation of a subset of OpenGL 1.x. If you want something like PortableGL but don't want to write shaders, just want old style glBegin/glEnd/glVertex etc. this is the closest I know of. Also I shamelessly copied his clipping code because I'm not 1/10th the programmer Bellard was even as an undergrad and I knew it would "just work".

TinyGL Updated: An updated and cleaned up version of TinyGL that adds several fixes and features, including performance tuning and limited multithreading.

Pixomatic is/was a software implementation of D3D 7 and 9 written in C and assembly by Michael Abrash and Mike Sartain. You can read a series of articles about it written by Abrash for Dr. Dobbs.

TTSIOD is an advanced software renderer written in C++.

As an aside, the way I handle interpolation in PortableGL works as a semi-rebuttal of this article. The answer is not the terrible strawman C approach he comes up with just to easily say "look how bad that is". The answer is that interpolation is an algorithm, a simple function, and it doesn't care what the data means or how many elements there are. Pass it data and let the algorithm do its job, same as graphics hardware does. While the inheritance + template functions method works ok if you only have a few "types" of data, every time you think of some new feature you want to interpolate, you need to define a new struct and a new template function specialization. Having a function/pipeline that just takes an arbitrary amount of float data to operate on takes less code and even has less runtime overhead since it's a single function that interpolates all the features at once rather than having to call a function for each feature. See lines ~1200-1250 of src/gl_internals. Obviously it looks more complicated with all the other OpenGL stuff going on but you can see a simpler version on line 308 that's used for interpolating between line endpoints instead of over a triangle. This is closer to his example but still longer because it has to support SMOOTH, PERSPECTIVE and FLAT. You can see the shape of a straightforward implementation even there though, and the benefits of decoupling the algorithm from the data it operates on.
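To make the "one function over an arbitrary number of floats" idea concrete, here is a minimal sketch of interpolating between two line endpoints. It is not the code from src/gl_internals; the enum and function names are hypothetical, the three modes are my own labels (flat, screen-space linear, perspective-correct) rather than PortableGL's constants, and taking the flat value from the second endpoint is an assumption of this sketch.

```c
/* Hypothetical mode names, for illustration only. */
typedef enum { MODE_FLAT, MODE_LINEAR, MODE_PERSPECTIVE } interp_mode;

/* Interpolate n floats between two line endpoints in a single pass.
 * t is the screen-space parameter in [0,1]; w0 and w1 are the clip-space
 * w values of the two endpoints. The function neither knows nor cares
 * whether the floats are colors, normals or texture coordinates. */
void interpolate_line(float* out, const float* v0, const float* v1, int n,
                      const interp_mode* modes, float t, float w0, float w1)
{
	/* Perspective-correct weight of the second endpoint */
	float inv_w = (1.0f - t) / w0 + t / w1;
	float tp = (t / w1) / inv_w;

	for (int i = 0; i < n; ++i) {
		switch (modes[i]) {
		case MODE_FLAT:
			/* No interpolation: take the value from one endpoint */
			out[i] = v1[i];
			break;
		case MODE_LINEAR:
			/* Linear in screen space */
			out[i] = v0[i] + t * (v1[i] - v0[i]);
			break;
		case MODE_PERSPECTIVE:
			/* Linear in 1/w, i.e. perspective-correct */
			out[i] = v0[i] + tp * (v1[i] - v0[i]);
			break;
		}
	}
}
```

Adding a new feature to interpolate means making the arrays one or more elements longer; no new struct or specialization is needed.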

Mesa3D is an open source implementation of OpenGL, Vulkan and other graphics APIs. It includes several different software renderers including the Gallium rasterizer (softpipe or llvmpipe depending on whether llvm is used) and Intel's OpenSWR.

libosmesa is an extraction and clean-up of the subset of Mesa3D 7.0.4 needed to support the old "swrast" software rasterizer and OSMesa offscreen renderer (both of which were removed from upstream Mesa3D in 2020). libosmesa's intended purpose is to allow programs to offer a last-resort fallback renderer when system OpenGL capabilities are unavailable (or inadequate), while being fully self contained and buildable as simple C with no external dependencies (which is why the 7.0.4 version was chosen for this purpose). As that version of Mesa targets OpenGL v2.1, libosmesa may be a useful middle ground between PortableGL's OpenGL 3.x target and TinyGL's minimalist subset.

bbgl is just a very interesting concept. When I first saw it soon after it was published I was very frightened that it was exactly what PortableGL is but far more polished and from a better programmer. Fortunately, it is not.

pixman: I feel like you could use them together or combine useful parts of pixman with PortableGL.

fauxgl: "3D software rendering in pure Go. No OpenGL, no C extensions, no nothin'."

swGL: A GPL2 multithreaded software implementation of OpenGL 1.3(ish) in C++. x86 and Windows only.

EmberGL: An MIT licensed 2D/3D graphics library featuring a tiled software rasterizer.

SoftGLRender: An OpenGL renderer/rasterizer in modern C++. The very impressive demo lets you toggle and change various features and settings including switching seamlessly between the GPU and the software renderer. AFAIK, this is the only project that lets you write shaders in C++ like PortableGL, however the process is more complicated since it has to work with the same class/API structure as real OpenGL and can't take the shortcuts that PortableGL does. An advantage of all the extra plumbing and complexity is the actual C++ shader code is more standardized, looks a little cleaner, and more like GLSL. Besides supporting many more features (though I didn't see gl_FragDepth?), it is also optimized with threading, and SIMD on x86. Given all the abstraction and extra overhead necessary to do what it does, it's impressive it manages to still be as fast as it is. As best I can tell, disabling features to get vaguely close to an apples to apples comparison, it's about as fast as PortableGL. So if you want OpenGL, don't need to use C, like modern C++/OOP style, and want software rendering while still being able to write your own shaders and avoid the old fixed-function mess, this might be the best option on this list (or a generic C++ OpenGL wrapper library linked with modern Mesa+llvmpipe).

TODO/IDEAS

- [ ] Render to texture; do I bother with FBOs/Renderbuffers/PixelBuffers etc.? See ch 8 of superbible 5

- [ ] Render to texture example program

- [ ] ~~Finish duplicating NeHe style tutorial programs from learningwebgl to opengl_reference and then porting those to use PortableGL~~ Port learnopengl instead, repo here WIP.

- [x] Port medium to large open source game project as correctness/performance/API coverage test (Craft done, other ideas)

- [ ] More texture and render target formats

- [ ] Logo

- [ ] Update premake scripts to Premake5 and handle other platforms once 5 is out of beta

- [x] Formal regression testing (WIP)

- [x] Formal performance testing (WIP)

- [ ] Formal/organized documentation

- [x] Integrated documentation, license etc. a la stb libraries